About

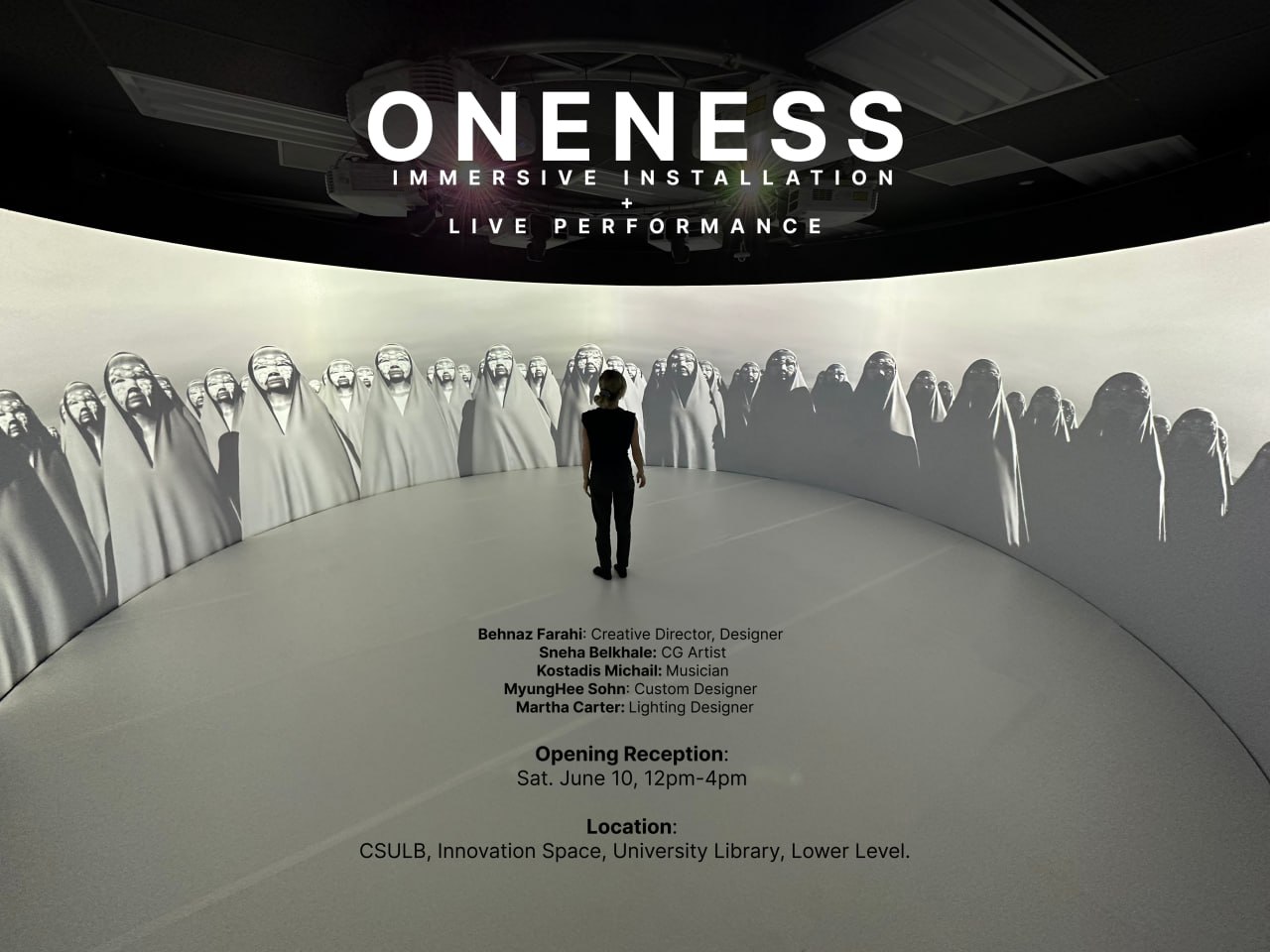

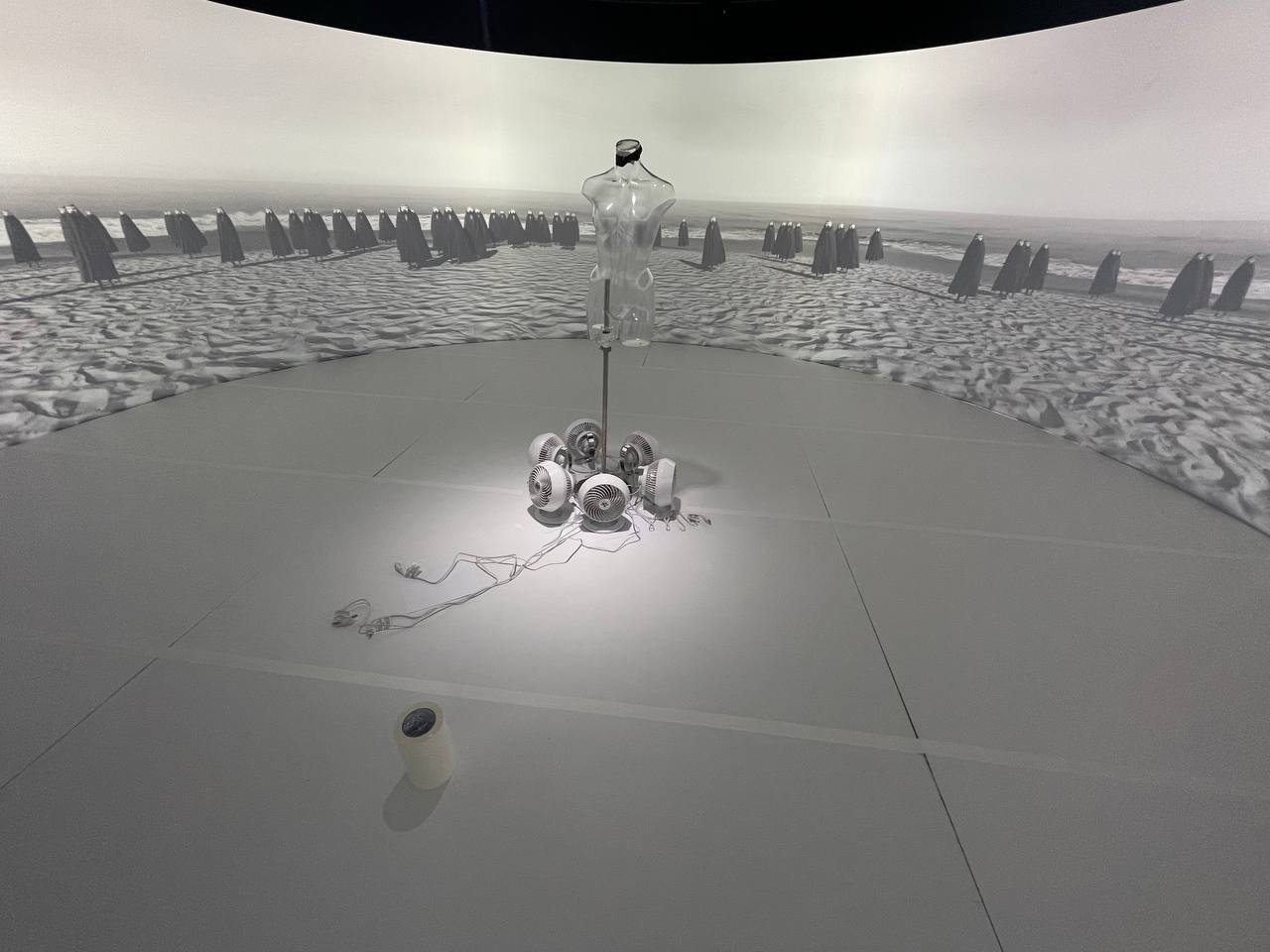

Oneness is a 360-degree immersive installation and performance, exhibited in the CSULB Innovation Space in collaboration with Behnaz Farahi. The piece envisions a future where an army of women wear robotic masks to communicate secret messages using AI-generated Morse code. The concept is brought to life through physical performance, costume design, robotics, 3D graphics, and sound design, immersing you in this powerful fictional environment.

I worked on the digital aspect of this project, creating the 360-degree, 8K, 7-minute render projected on the dome during the installation. I'm really proud of the technical achievements on this project, specifically bringing high-resolution fabric simulations into Unreal Engine, and compositing and rendering in 8K out of Unreal Engine.

And I'm also so happy to have had the chance to work with Behnaz! I had been following her work for years. She makes beautiful, algorithmically oriented, robotic, 3D printed fashion, with a critical eye for design and story. A recent piece I saw from her was called Can the Subaltern Speak. Inspired by the Bandari women in Iran, Behnaz created a 3D printed mask with 17 mechanical blinking eyelashes. The idea is that the women wearing these masks are able to communicate with each other by blinking their eyelashes in Morse-coded patterns. They develop their own language, empowered with their faces concealed.

This project blew my mind: first the intricacy of the 3D printed design, and then how critically it brings technology into the social phenomenon of the (male) gaze, and lets us imagine a future where this mask could be used as a protective element, a biological enhancement.

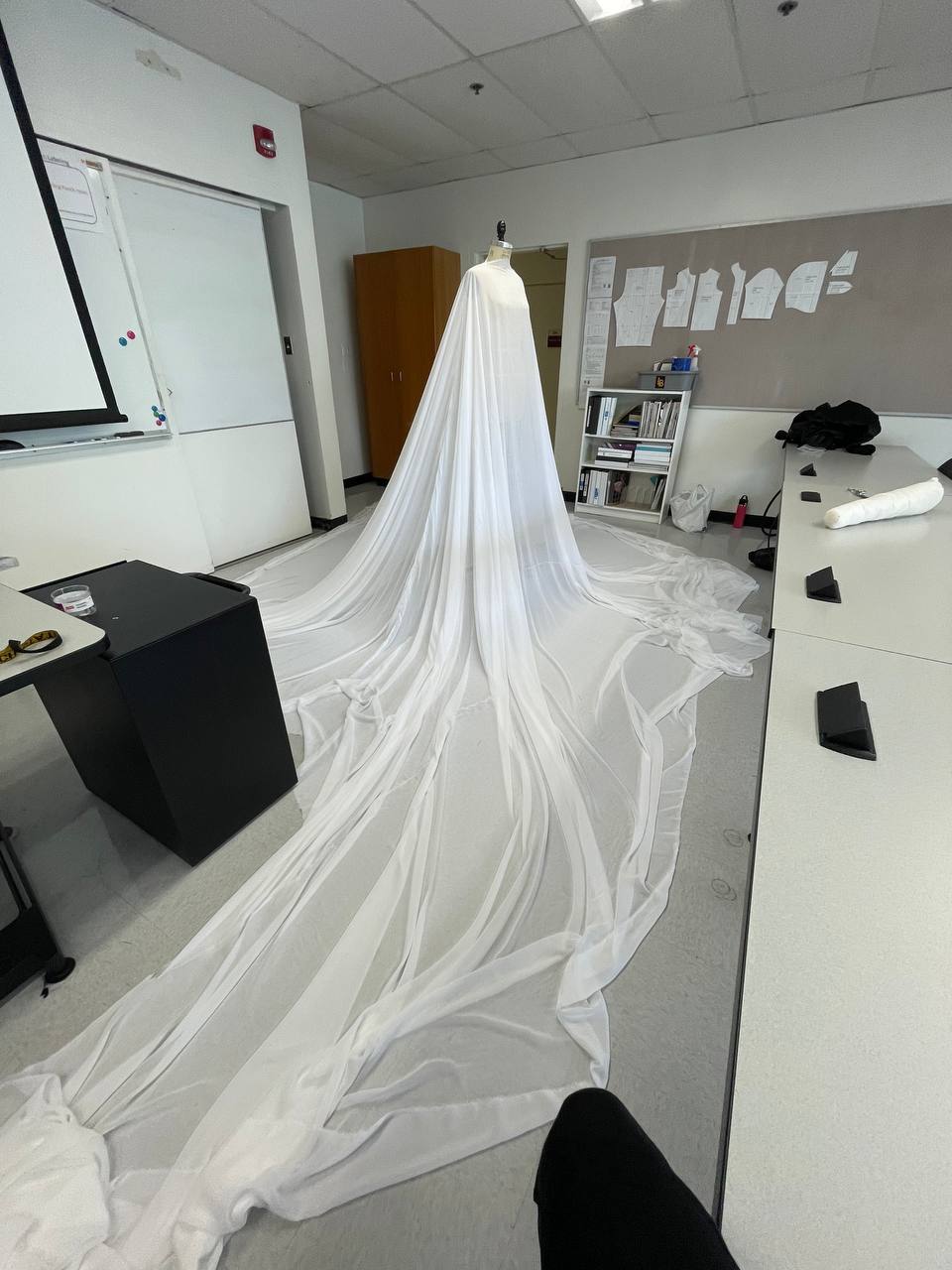

I reached out to Behnaz, asking her if she would like to collaborate, as I felt like we could be a harmonious blend of physical and digital creation. We chatted and I think we both could feel there was a good energy between us. She had access to a 360 immersive projection cylinder dome, and in a beautiful moment of synergy we imagined how powerful it would be to digitally recreate her mask from "Can the Subaltern Speak", and create a scene with hundreds of women wearing the mask in chador, slowly walking towards you. And in the center of the physical space, there would be a real woman wearing the physical mask and a long white chador that extended to the diameter of the room, with wind underneath creating ripples in her fabric. This was our fever dream, giving us chills as we spoke it out loud, and I can't believe we made it happen, one step at a time.

We started this journey on the technical side. Behnaz sent me the 3D model for the mask, and I loaded it into Houdini to rig the 17 eyelashes, and then animated them in Unreal Engine. Of course this process reminded me of how Houdini can literally do anything.

Now that we had one mask digitally replicated, we could have hundreds! We started thinking about the aesthetics for the scene and how to shape it for the cylinder dome. We loved the idea of having hundreds of women surrounding the camera, and slowly walking towards it from all angles.

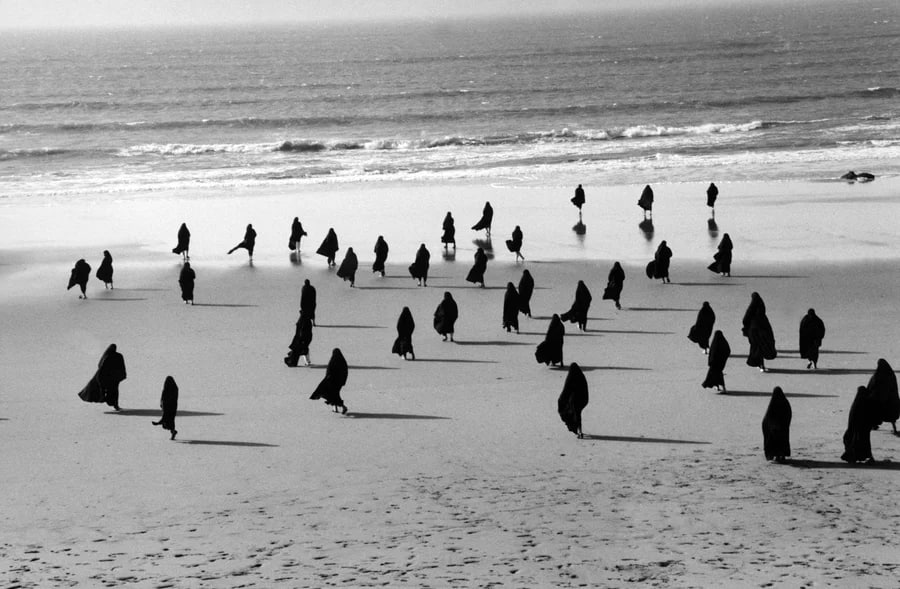

Behnaz has a strong love for the ocean, living just a few minutes from the beach and surfing every morning :) So she was keen on having an ocean in the background, for the surreal feeling of the women emerging from it.

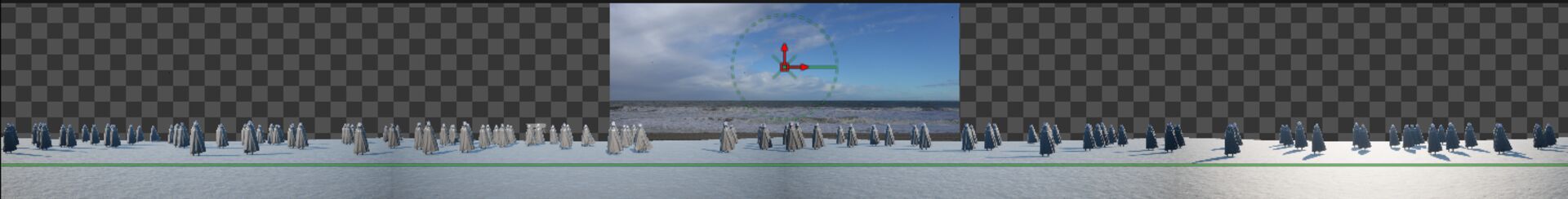

On the technical side in Unreal, this could have been implemented by mirroring one 4K video. However, we quickly realized that mirroring places a large aesthetic restriction and creates various glitches in the shadows that we would have to work around. This meant that we had to go all the way: create the full scene in Unreal Engine and render all 360 degrees. Although this meant having a super heavy scene in Unreal Engine, I was happy to work this way because it would lead to freedom in aesthetic choices later. For example, now we could have lighting that affected each character differently for a dynamic and dramatic scene.

This also posed the challenge of how to render 8K footage out of Unreal on my little laptop with an NVIDIA RTX 2060! I downloaded some plugins meant for rendering 360 video from Unreal Engine, but they simply ran out of memory trying to render the 8K that I needed. I had to build my own 360 renderer, essentially a rig of four 90-degree FOV cameras placed at 90-degree angles to each other. I rendered the sequence from each camera individually, using a post-processing material to do the cylinder projection warping (ask me about an interesting moment using ChatGPT to solve the projection mapping equation myself), then stitched the four images together using FFmpeg.
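For the curious, here is a minimal offline sketch of the kind of warp the four-camera rig above needs. It is not the actual Unreal post-processing material, just a NumPy illustration of the geometry under some assumptions: each camera render is square with a 90-degree field of view in both directions, the cylinder has unit radius, and the renders are passed in left-to-right order. The function names (`cylinder_lookup`, `warp`, `stitch`) are made up for this example.

```python
import numpy as np

def cylinder_lookup(out_w, out_h, half_fov=np.pi / 4, tan_half_vfov=1.0):
    """For every pixel of one cylindrical strip, return the (u, v) position
    in [0, 1] at which to sample the matching perspective render."""
    # Azimuth of each output column, spanning (-half_fov, +half_fov).
    theta = (np.arange(out_w) + 0.5) / out_w * 2 * half_fov - half_fov
    # Height on a unit-radius cylinder, capped so the strip corners
    # still land inside the perspective frame.
    h_max = tan_half_vfov * np.cos(half_fov)
    h = ((np.arange(out_h) + 0.5) / out_h * 2 - 1) * h_max
    theta, h = np.meshgrid(theta, h)
    # The ray (sin t, h, cos t) hits the image plane z = 1 at
    # (tan t, h / cos t); normalise both to [-1, 1].
    x = np.tan(theta) / np.tan(half_fov)
    y = h / np.cos(theta) / tan_half_vfov
    return 0.5 + 0.5 * x, 0.5 + 0.5 * y

def warp(image, out_w, out_h):
    """Resample one perspective render into a cylindrical strip
    (nearest-neighbour sampling, to keep the sketch short)."""
    u, v = cylinder_lookup(out_w, out_h)
    src_h, src_w = image.shape[:2]
    cols = np.clip((u * src_w).astype(int), 0, src_w - 1)
    rows = np.clip((v * src_h).astype(int), 0, src_h - 1)
    return image[rows, cols]

def stitch(renders, out_w, out_h):
    """Warp each camera render and place the strips side by side."""
    return np.hstack([warp(r, out_w, out_h) for r in renders])
```

The key line is the `tan` / `1 / cos` pair: equal steps in angle around the cylinder are not equal steps across a flat camera image, so each column has to be looked up at `tan(theta)` and stretched vertically by `1 / cos(theta)`. In the real pipeline the warp ran per frame inside Unreal and the side-by-side stitch was done with FFmpeg rather than NumPy.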

It was quite a pipeline to figure out! But at the end of it, I was able to get an 8K video for a 360 projection rendered from my bb laptop.

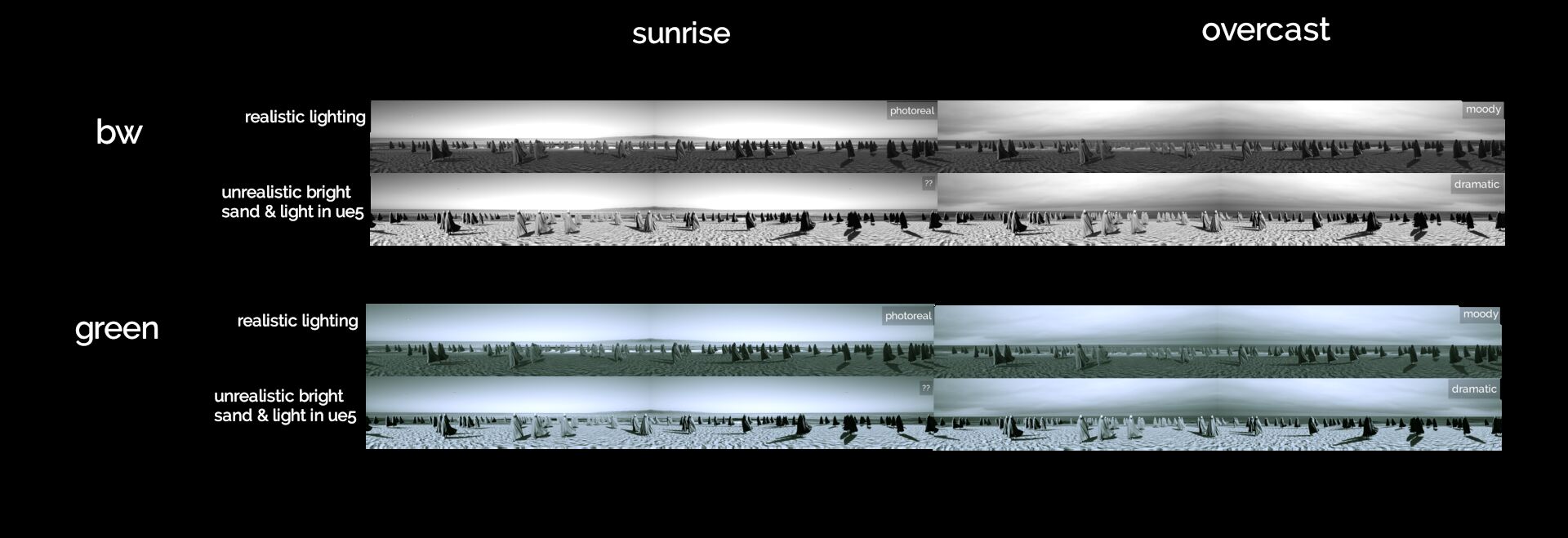

Behnaz took some footage of the ocean with a wide lens, and I played with the lighting in Unreal Engine and the compositing in DaVinci to match. We went back and forth testing different combinations, but eventually we converged on our shared love for black and white, moodiness, and fog :)

The character AI for this piece was quite simple, as the characters had only a few behaviors: walk to the camera, speak to each other, and blink. The part that probably took me the longest was getting the cloth simulation to play nicely with all these states! I did a pretty deep dive into cloth simulation for this project, to solve the challenge of rendering hundreds of simultaneous cloth simulations in real time. I played a bit with the simulation tools in Unreal Engine, but they were much too hard to control and couldn't give me the level of detail I wanted. I decided to go with Vellum in Houdini FX, exporting the result to Unreal using Vertex Animation Textures (VAT). There was a lot of hackery needed to blend between two VATs (as I needed a walk and an idle cloth state), as well as to apply a bone transform for the head rotations in conjunction with the simulation.

However, once this was solved, it's just one shader lookup per frame for the intricacy of a Houdini Vellum cloth simulation! And it seemed like I could not even hit a rendering bottleneck in Unreal Engine with 200+ characters!

We added some wind, offsets per character, slowed down the cloth simulation speed, and the result was powerful.
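To make the VAT idea a bit more concrete, here is a small NumPy sketch of blending a walk and an idle cloth state, with a per-character time offset and a speed factor. This is only an illustration, not the actual Unreal material: it assumes each VAT is an array of per-vertex offsets from a rest pose with shape `(frames, vertices, 3)`, that both clips loop, and that a simple linear blend is good enough. The names `sample_vat` and `blended_cloth` are invented for this example, and the head bone transform is left out.

```python
import numpy as np

def sample_vat(vat, time, fps=30.0):
    """Read one looping VAT at a given time, interpolating linearly
    between the two nearest baked frames."""
    frames = vat.shape[0]
    f = (time * fps) % frames
    i0 = int(np.floor(f)) % frames
    i1 = (i0 + 1) % frames          # wrap around so the clip loops
    t = f - np.floor(f)
    return (1 - t) * vat[i0] + t * vat[i1]

def blended_cloth(rest, walk_vat, idle_vat, time, walk_weight,
                  time_offset=0.0, speed=1.0):
    """Blend walk and idle cloth offsets and add them to the rest pose.

    walk_weight = 1 is fully walking, 0 is fully idle. time_offset
    desynchronises characters; speed < 1 slows the cloth down."""
    t = (time + time_offset) * speed
    walk = sample_vat(walk_vat, t)
    idle = sample_vat(idle_vat, t)
    return rest + walk_weight * walk + (1 - walk_weight) * idle
```

In a shader the same thing becomes a couple of texture reads and a lerp per vertex, which is why it stays cheap even with hundreds of characters on screen.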

The digital side was coming together! With feedback and suggestions from Behnaz along the way, I was really happy with the direction it was going. Since I was making my way to Los Angeles, Behnaz and I decided to have a little 24-hour hackathon together. I was so excited to meet in person! We spent the day in the Innovation Space at CSULB, testing out different color corrections for the visuals, playing with fabric to cover the carpet for the exhibition, getting to know each other, seeing the mask in real life (!), skating and biking around Venice Beach, looking at her 3D printed material experiments in her studio, and having a sweet salmon & wine dinner :) In the morning we even got up at 7AM to catch some footage of the ocean in overcast (which is the footage we used in the final video).

The next week, we also went to the fashion district in Los Angeles to see what fabric options we had for the chador that should span the width of the space. I didn't know this, but apparently most of the stores in the area are run by Iranians. Behnaz was speaking to them in Farsi, and it was really fun for me to watch the interactions. Everything became louder and more animated, and I felt like we had entered a portal escaping America.

After this, I was off to New York. Behnaz spent the next few weeks sending me updates on the physical part of the installation, such as printing a new mask in white, making the large white chador, working on the fan system to lift the chador like waves, and laying a new white flooring. To me, everything she does in the physical realm is so thoughtfully executed and stunning.

We also brought my friend Kostadis, a musician from Athens, into the project to work on the sound design. This was a very fun mixing of my worlds, as I had met Kostadis covered in sparkles at a rave the previous year, and I had also worked on a music video for one of his tracks, Emerald. Of course he is an amazing musician, and it really was such a smooth collaboration. He knew exactly how to bring the piece to life, adding elements we could never have imagined (listen for the shrimp sounds :).

The show was on June 10th. I wish that I could have been there in person to witness all the physical elements coming together. Behnaz said that it was a success, with many people questioning whether the 360 projection was real footage :) She got a videographer at the event, so I will update this article with the footage once I get it!

Overall, I'm so proud of us for making this dream a reality. I think we made something so unique and powerful, from the heart.